Closing the Gap

Part 1: Why AI Safety and AI Ethics Can't Talk to Each Other

In December 2025, Roytburg and Miller published “Mind the Gap! Pathways Towards Unifying AI Safety and Ethics Research,” and it’s basically a network analysis of the AI research landscape. What they found is something that most people in the field already kind of knew, but nobody had put numbers on: AI Safety and AI Ethics are two separate communities, and they don’t really talk to each other.

The numbers, though, are worse than the vibes suggested. 83.1% of collaboration stays within community boundaries. Five percent of papers account for 85% of whatever crosstalk exists... and the part that really got me: remove the top 1% of bridge authors, and the connectivity between the two communities drops to zero. Not “drops significantly.” Zero. Just completely disconnected.

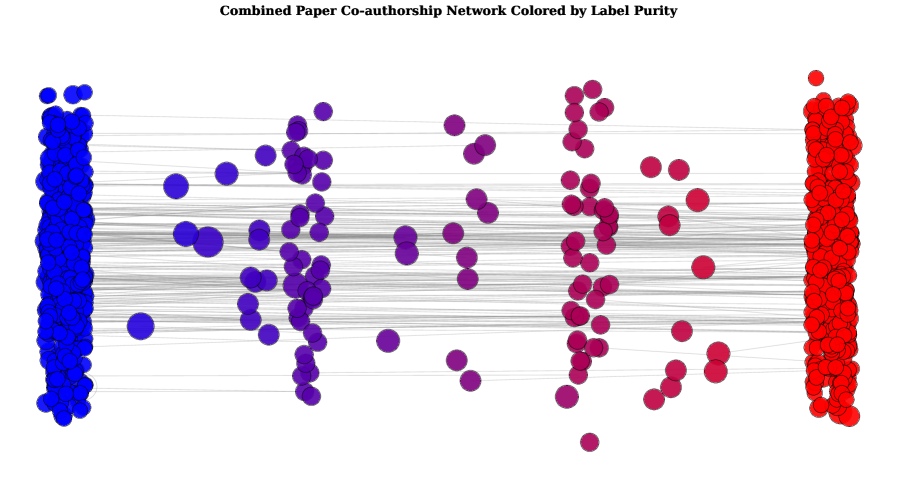

Their network visualization kind of tells you the whole story just by looking at it:

Figure 1: Roytburg & Miller, “Mind the Gap!” (arXiv:2512.10058), CC BY 4.0

Blue for Ethics, red for Safety. Two dense clusters, and then this sparse purple gap between them. I suspect the colour choices were not random...

But the analysis stops at the what. What it doesn’t answer is why.

And there’s something that makes the why kind of urgent. In 2024, Ren et al. looked at safety benchmarks and found that a bunch of them don’t actually measure safety; they measure capability, or they measure safety so narrowly that it’s nearly meaningless. Both communities should care about that. Both communities should want better tools. But they can’t work together on it, and the reasons for that go deeper than just “they disagree.”

The easy explanation, the one that kind of lets everyone off the hook, is that they just disagree. Safety researchers worry about existential risk from superintelligent systems, and ethics researchers worry about present-day harms from deployed systems. Different threat models, different timelines, different methodological traditions. There’s real truth in that, I’m not going to pretend there isn’t. These are genuinely different research programs with different starting assumptions. Some portion of that 83.1% probably reflects real intellectual distance.

Even granting that, though, however you apportion the split, it’s hard to argue that it doesn’t leave a gap. Likely a big one, given that the MASSIVE divide at the top of the funding food chain doesn’t map nearly as neatly onto the researchers on the ground who actually do the work. For context, a scientometric analysis of the Brazilian research community found that 35.2% of collaborations crossed disciplinary boundaries (Lopes et al., 2020). Now that’s a different comparison, all of Brazilian science versus two AI subcommunities, so you can’t map the numbers directly. But it gives you a sense of the baseline: a 64.8% within-discipline rate, across fields with way less paradigmatic overlap than two communities that are both studying AI systems, against 83.1% here. And those fields still managed to build bridges. These two barely have footpaths.

So what accounts for the rest of it?

The divide maps almost perfectly onto a funding divide. And the funding divide maps onto a political divide. This isn’t a coincidence. The funding architecture creates the research architecture, the research architecture feeds back into the political architecture, and the whole thing gets worse because of a technical asymmetry where one community can actually look inside AI systems and the other one just... can’t. They can measure where the proverbial Plinko disc lands, but they can’t see the pegs or where the disc started.

That’s not a disagreement. That’s a dependency. And dependencies come at a cost.

Follow the Money

If you want to understand why the divide keeps going, you kind of have to start where most things start... by following the money.

The Safety Side

AI Safety funding comes from a surprisingly small number of people. Total external funding in 2024 was roughly $110-130M globally, and one funder, Open Philanthropy (now Coefficient Giving), Dustin Moskovitz’s foundation, accounted for up to about 60% of that: $50-63M in a single year, depending on what you count. One guy’s money. And for context, their 2025 grantmaking is on track to exceed $130M, more than doubling the previous year. So the concentration isn’t shrinking, it’s getting worse.

Second largest is Jaan Tallinn’s Survival and Flourishing Fund, about $152M since 2019. Tallinn co-founded Skype, his net worth is around $900M. After that, you’ve got the Long-Term Future Fund, which is basically a regranting vehicle where roughly half the money comes from Open Phil anyway. Pass-through with extra steps.

The intellectual family tree is well-documented: Bostrom’s Superintelligence formalized the existential risk frame, Yudkowsky and LessWrong built the conceptual infrastructure, EA provided the moral framework, and Open Philanthropy turned it into a grantmaking pipeline. Roytburg and Miller trace this genealogy explicitly, noting that the field’s trajectory has been “heavily influenced by Effective Altruism.” I mean, yeah, of course it was.

The institutional homes are concentrated: MIRI, Redwood Research, Apollo Research, METR, ARC, plus internal safety teams at Anthropic and DeepMind. Mostly Bay Area and London. The technical depth is real. Interpretability tools, formal verification, red-teaming frameworks, things like that. Almost entirely funded by two people’s money. Two guys. That’s the whole supply chain.

The Ethics Side

AI Ethics funding has historically been a lot more fragmented. Universities, government agencies, and progressive foundations each giving smaller amounts across a wider range of institutions. No single funder dominated. It was just kind of, you know, spread around.

That changed in October 2025 when ten progressive foundations announced the Humanity AI coalition: $500M over five years, co-chaired by Omidyar Network and MacArthur Foundation, with members including Ford, Lumina, Kapor, Mellon, Mozilla, Siegel Family Endowment, and Packard, and managed by Rockefeller Philanthropy Advisors.

$500M is a big number. In total, it’s about four times what Open Philanthropy is on track to spend on AI safety in 2025, though spread over five years it works out to roughly $100M a year. But look at the five focus areas (democracy, education, humanities and culture, labor and economy, and security) and you can tell what it’s actually for. None of them mention interpretability. None mention alignment research. None mention evaluation methodology or safety tooling of any kind. It’s just not what they’re buying.

The existing institutional landscape reflects this. DAIR Institute, founded by Timnit Gebru after her departure from Google, launched with $3.7M and focuses on AI harms research. AI Now Institute studies the social implications of AI systems. Algorithmic Justice League works on bias in computer vision. FAccT conferences publish on fairness, accountability, and transparency. Important work, all of it, but with a few exceptions, work that operates on the outputs of AI systems rather than their internals. It’s a bit like being a restaurant critic who’s never been allowed in the kitchen, you can describe what came out wrong, but you can’t actually intervene in the cooking.

The Pattern

Lay them side by side, and the pattern is hard to miss. Tech wealth on one side, progressive philanthropy on the other. Safety researchers often end up employed by the labs they study, which is both an advantage and a problem. Like a building inspector who gets hired by the construction company. Maybe they’re still honest... but you’d feel a little bit better about it if they weren’t on the payroll. Ethics researchers are more likely to be adversarial toward the labs, and that means less access, fewer resources, and no ability to build the tools they’d need to make their critiques harder to dismiss. You can see how this becomes a self-reinforcing issue, where the less you can do technically, the less credible you look to the technical funders.

The funding sources aren’t just different in amount. They’re different in kind. Different theories of change, different definitions of success, different incentive structures for what even counts as legible progress. A safety researcher who publishes an interpretability result is legible to Open Philanthropy. An ethics researcher who publishes a fairness audit is legible to MacArthur. A researcher who tries to do both, wait, actually, that researcher basically doesn’t exist, and there’s a reason for that.

Someone trying to do both has to explain themselves to two communities that can’t talk to each other about what good work looks like. And nobody wants to be the person who has to translate between two groups that aren’t really listening. So you don’t try. You pick a lane.

Look, corporate R&D budgets aren’t public, individual Humanity AI commitments aren’t broken out, and federal funding crosses both domains without clean categorization. The numbers get messy. But the structural story doesn’t depend on exact dollar amounts. Two ideologically coherent funding ecosystems produce two research communities that are basically walled off from each other. That’s exactly what Roytburg and Miller found.

The Arguments That Pay for Themselves

Roytburg and Miller identify two recurring intellectual tensions between the communities. Both are real disagreements. Both are also, and I think this is the part people don’t want to say out loud, really effective fundraising pitches.

The first is the distraction argument. Ethics researchers argue that Safety’s focus on speculative existential risk diverts finite resources (talent, funding, and public attention) away from documented harms hitting marginalized communities right now. Timnit Gebru’s widely-cited Wired critique framed the entire EA-to-safety pipeline as an ideologically motivated redirection of resources, explicitly naming the funding chain from tech billionaires through EA institutions to alignment labs. Not subtle.

The second is the scoping argument. Safety researchers and some sympathetic critics within ethics itself argue that a lot of what comes out of the ethics community just doesn’t have enough technical specificity to actually do anything. You can call for “justice” or “inclusivity,” and those things matter in principle, but it’s hard to formalize them into something that changes how a model is trained. Munn’s 2023 paper “The uselessness of AI ethics” made the case pretty bluntly: ethics recommendations often don’t translate into interventions that engineers can actually implement. When that critique lands, it lands hard, because it’s pointing at a real gap between what people want and what they’ve built a mechanism to get.

Both arguments have merit. Genuinely. But this is where the funding structure starts to matter in a way that’s kind of hard to see until you’re looking for it.

When an ethics researcher argues that x-risk focus is diverting resources from present harms, they’re making an intellectual point. They are. But they’re also, whether they intend to or not, making a case to progressive funders for why their work deserves the next grant. The argument is legible to MacArthur. It’s legible to the Humanity AI coalition. It reinforces the identity and urgency of the ethics community in exactly the terms their donor base responds to. That’s not me saying it’s cynical, it’s me saying the incentive structure is just sitting right there, doing its job. When a safety researcher dismisses ethics work as technically unserious, they’re also performing legibility for Open Philanthropy’s grantmaking criteria. Technical rigor, formal methods, measurable alignment progress, those are the outputs that EA-adjacent funders reward. So of course that’s what gets produced.

And I think most people making these arguments genuinely believe them. That’s actually what makes it so hard to see. The problem isn’t that anyone is lying. It’s that two funding ecosystems have created two incentive buckets where maintaining the critique of the other side is just more rewarded than resolving it. A safety researcher who publishes a paper engaging seriously with fairness concerns doesn’t get extra credit from Open Phil. The work doesn’t map onto their 21 research directions. An ethics researcher who collaborates with an alignment lab risks their credibility with a donor base that views those labs as part of the problem.

So you end up with this kind of intellectual trench warfare where each side’s strongest arguments against the other happen to also be their strongest arguments for their own funding. It’s akin to two people in a custody battle where being right about the other person’s flaws is also how you win. The arguments don’t need to be manufactured. They just need to be selected for. The funding architecture does the selecting.

Okay, there’s a case study here that I think is really revealing. Ren et al.’s 2024 paper on “safetywashing” documented something both communities should care about equally: safety benchmarks that actually measure model capability rather than safety. That’s a technical finding with implications for everyone. You’d think it would be common ground, right?

But the two communities metabolized it completely differently. Ethics researchers cited it as evidence that the entire safety paradigm is performative: “See, even their own benchmarks don’t measure what they claim.” Safety researchers cited it as evidence that better benchmarks are needed: “See, we need more rigorous evaluation methodology.” Same paper, same finding, absorbed into whatever story each side was already telling. The paper became ammunition for both sides rather than, you know, a thing they could actually work on together. And notice what neither side’s version produces: a joint research program to build better benchmarks. That would require funding that rewards cross-pollination. That funding doesn’t exist.

Which brings us to the bridge authors, the people Roytburg and Miller identified as the only thing connecting the two communities. The top 1% of authors by degree centrality control 58% of all shortest bridge paths. The top 5% control 88.1%. And even with those bridge authors in place, the connectivity is worse than random. After five hops through the coauthorship network, only 16.9% of safety-ethics author pairs are connected. The expected rate if collaboration were random? 21.5%. So the two communities aren’t just separated, they’re more separated than they would be if nobody were trying at all, because the clustering within each side is so strong that it basically suppresses cross-community links. The in-group gravity is eating the bridge.
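If those network terms feel abstract, here’s a minimal sketch of the kind of measurement involved. To be clear, this is not Roytburg and Miller’s pipeline or their data: the graph, the author names, the `community` labeling rule, and every function below are made up for illustration, and it ranks authors by plain degree on a nine-edge toy graph. It computes the share of ties that stay inside a community, the share of safety-ethics pairs reachable within five hops, and what happens to that second number when the best-connected author is removed.

```python
import math
from collections import deque

# Hypothetical toy coauthorship graph: two tight clusters, one cross-community tie.
EDGES = [
    ("s1", "s2"), ("s1", "s3"), ("s2", "s3"), ("s3", "s4"),  # safety cluster
    ("e1", "e2"), ("e1", "e3"), ("e2", "e3"), ("e3", "e4"),  # ethics cluster
    ("s3", "e3"),                                            # the only bridge
]


def community(author):
    """Made-up labeling rule: 's' prefix is safety, anything else is ethics."""
    return "safety" if author.startswith("s") else "ethics"


def build_adjacency(edges):
    """Turn an undirected edge list into a dict of neighbor sets."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def within_share(edges):
    """Fraction of coauthorship ties that stay inside one community."""
    same = sum(1 for a, b in edges if community(a) == community(b))
    return same / len(edges)


def reachable_within(adj, start, max_hops):
    """Breadth-first search: authors reachable from start in at most max_hops steps."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        if dist == max_hops:
            continue
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    seen.discard(start)
    return seen


def cross_connectivity(adj, max_hops=5):
    """Share of safety-ethics author pairs joined by a path of at most max_hops."""
    safety = [a for a in adj if community(a) == "safety"]
    ethics = {a for a in adj if community(a) == "ethics"}
    if not safety or not ethics:
        return 0.0
    linked = sum(len(reachable_within(adj, s, max_hops) & ethics) for s in safety)
    return linked / (len(safety) * len(ethics))


def drop_top_by_degree(adj, fraction):
    """Remove the top `fraction` of authors by degree (always at least one author)."""
    k = max(1, math.ceil(fraction * len(adj)))
    ranked = sorted(adj, key=lambda a: (-len(adj[a]), a))  # ties broken by name
    removed = set(ranked[:k])
    return {a: nbrs - removed for a, nbrs in adj.items() if a not in removed}


adj = build_adjacency(EDGES)
share_within = within_share(EDGES)
before = cross_connectivity(adj)
after = cross_connectivity(drop_top_by_degree(adj, 0.01))
print(f"within-community share: {share_within:.3f}")
print(f"cross-community connectivity: {before:.2f} -> {after:.2f}")
```

On this toy graph, 8 of the 9 ties are within-community, every safety-ethics pair is reachable until the single best-connected author is dropped, and then none are. The comparison against a random baseline would need a null model (for example, rewiring edges while keeping each author’s degree), which this sketch leaves out.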

So, who funds the bridge authors? Or I guess more precisely, who funds bridge work? And the answer, structurally, is kind of nobody. Think about a researcher who wants to study how language models encode racial bias using mechanistic interpretability methods. This is exactly the kind of work that would bridge the divide. You’re taking a safety-side tool, interpretability, and applying it to an ethics-side concern, bias. It’s technically rigorous, it’s socially relevant, it’s the thing everyone says they want more of.

Now, try to fund it.

Open Philanthropy’s 2025 RFP lists 21 research directions. A proposal framed around racial bias and distributional justice doesn’t match the language. Humanity AI’s five focus areas don’t match either. The technical methodology is just complete gibberish to a program officer evaluating for social impact. You’re talking about activation vectors, and they want to know about community outcomes.

So the proposal falls between two stools. Too justice-oriented for the safety funders. Too technical for the ethics funders. Not because either side objects to the work in principle, but because neither side’s grantmaking infrastructure is built to evaluate it. The forms don’t have the right boxes. The reviewers don’t have the right background. It’s not a conspiracy, it’s just bureaucratic topology. Researchers who want to do it basically have to fund it sideways: get a safety grant and sneak in the fairness angle, or get an ethics grant and downplay the technical methodology. That’s not a sustainable system. That’s researchers running a con on their own grant applications just to do the right work.

When the Money Gets Political

Everything I’ve been talking about up to this point is implicitly political. Two funding ecosystems with different ideological DNA naturally produce two research communities. But in 2025 and 2026, the subtext became the text.

In February 2026, Anthropic donated $20M to Public First Action, a pair of Super PACs (one Democratic, one Republican) that OpenSecrets described as “tied to Anthropic insiders and the effective altruism movement.” Anthropic’s backers had already given $174M to Democratic candidates by September 2025. So the EA-to-safety funding pipeline that pays for alignment research is now also paying for electoral candidates who’ll vote for safety-oriented AI regulation. Same money, same pipe, new outlet.

On the other side, Leading the Future, a Super PAC backed by a16z and basically adjacent to OpenAI’s investor network, has a $125M fundraising target. Their position isn’t subtle. Less regulation, faster scaling, fewer guardrails. It’s the accelerationist lobby but expressed as direct political spending, you know, just writing checks instead of writing blog posts.

And then Encode Justice, a progressive advocacy organization pushing for AI regulation from a civil rights angle, got subpoenaed by OpenAI in the context of the Musk lawsuit, and the demands targeted the organization’s communications about SB 53 and its funding sources during active legislative negotiations. Which is, I don’t know, it’s a lot.

The money lines are pretty clean if you trace them. EA-adjacent tech wealth flows through Open Philanthropy to alignment researchers to pro-regulation Super PACs. Progressive philanthropy flows through Humanity AI to ethics researchers to civil rights advocacy organizations. And a16z-adjacent venture capital flows to accelerationist Super PACs that want to dismantle the regulatory conversation entirely. Three pipelines, three political projects, barely any overlap.

I want to be careful here because this is a structural observation, not a knock on individual researchers. The people doing alignment work or fairness audits are, overwhelmingly, doing it because they believe in it. They don’t control where their funders spend political money, and most of them are several rungs removed from those decisions. Blaming individuals for systemic incentives is exactly the kind of dodge that keeps structural problems from getting fixed. Might as well be yelling at the barista because the CEO did something terrible, you’re technically inside the same building but you’re pointing at the wrong floor.

The safety community and the ethics community agree on more than they disagree about. They both believe AI systems need guardrails. They both oppose unconstrained scaling without oversight. They both want regulation. On the fundamental question of whether or not powerful AI systems should be subject to external accountability, they’re generally on the same side.

Their actual opponent is the $125M accelerationist lobby that wants no meaningful regulation at all. And that lobby benefits directly from the schism.

When safety and ethics researchers spend their political capital arguing about whether existential risk or present-day bias is the “real” problem, the accelerationist position wins by default. They don’t need to defeat both sides. They just need to wait for them to exhaust each other. It’s like watching two goalkeepers argue about which end of the field to defend while someone walks the ball into the empty net.

This isn’t a conspiracy, by the way. It’s a coordination failure that happens to have a beneficiary. The safety-ethics divide is, functionally, a gift to the people who want no guardrails at all. Not because anyone designed it that way, but because the funding architecture makes coordination so expensive that the default outcome is fragmentation. And fragmentation, in a political context, means the loudest and best-funded position wins. Right now, that position has a $125M fundraising target and a simple message: get out of the way.

There’s a complication worth naming, though. Not everyone on the ethics side accepts the “natural allies” framing. A meaningful faction, Gebru among them, views the safety community’s regulatory agenda as itself captured. Capability evaluations and safety frameworks that basically give frontier labs a “we passed the test” shield while present-day harms continue unaddressed. For these researchers, the problem isn’t that the two communities can’t coordinate on regulation. It’s that the regulation itself has been shaped by one side’s priorities. And I mean, that’s not a crazy position to hold.

But it gets thorny, because you’ve got to ask: does the ethics community actually want independent technical capacity, or do they see it as co-optation? Like, is building better tools just another way of saying “your work only counts if it looks like our work”? If some ethics researchers think that pushing for technical infrastructure is itself a form of capture, then talking about infrastructure doesn’t solve anything. It makes the problem worse. So that’s a real objection, and I don’t think it gets enough airtime.

I think the answer is more nuanced than just “they want it” or “they don’t.” Some of them don’t want it because they think technical tools are inherently a safety-community frame. Fair enough. But the ones who actually do want technical capacity, who’ve been banging on the door for it, they want a version that doesn’t come with strings attached. They want tools that let them ask their own questions, study what they think matters, and publish findings on their own terms without having to route through safety-defined benchmarks or ask permission from labs they distrust. And look, that’s actually achievable. It just means stepping back from the idea that there’s one right way to do technical work on AI systems.

Because the ethics researchers who want nothing to do with the safety community’s frameworks are the ones who most need independent infrastructure. They don’t have to collaborate if they don’t want to. They don’t have to seek permission. They can build their own instruments, study what they think matters, publish results, and let the work speak for itself. The most skeptical faction of the ethics community should be the easiest sell for this proposal, precisely because it’s the one path that doesn’t require them to trust the people they distrust. You don’t need to hold hands with someone to walk in the same direction.

The Tool Gap Is the Power Gap

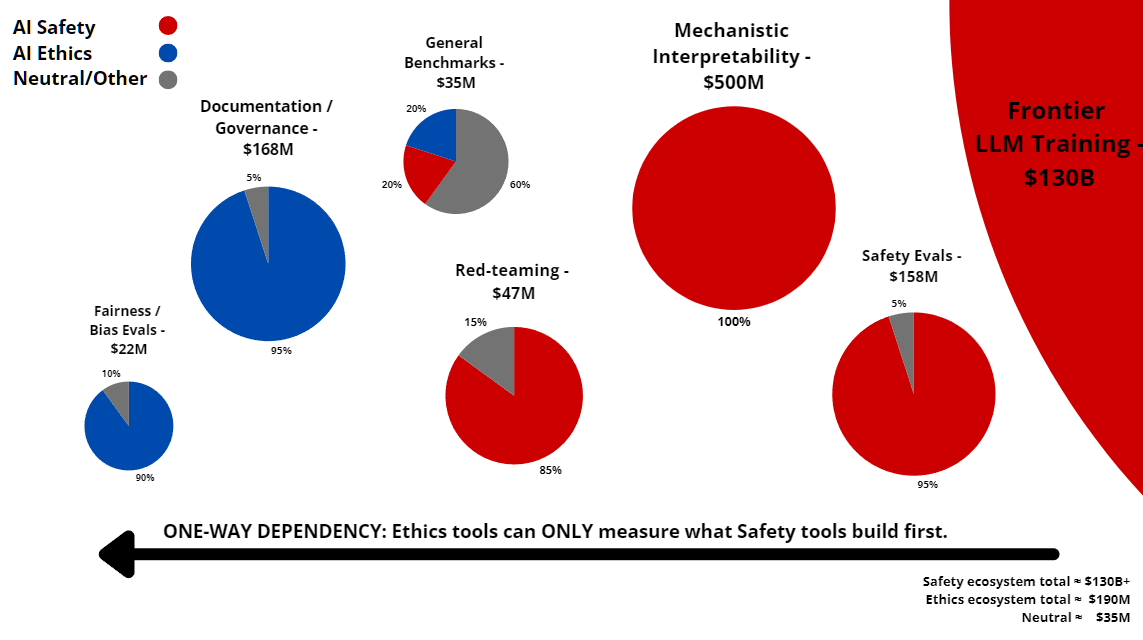

Okay, so before I get into what could actually be done about any of this (that’s Part 2), just stick the two ecosystems next to each other and look at what you’ve got.

The safety ecosystem has real technical depth. Interpretability, formal verification, red-teaming, model evaluations: that stuff is legitimate and sophisticated. But the funding is extremely concentrated, something like 60% from a single source, and the institutions are clustered, maybe 10 core orgs mostly in the Bay Area and London. The demographics track with EA demographics, which is to say, not a very diverse group. And the political influence is direct, the kind that shows up as $20M Super PAC donations and $174M flowing to candidates.

The ethics ecosystem is pretty much the inverse. The technical depth is lower: bias audits, fairness benchmarks, documentation frameworks, policy analysis, that kind of work. Important, but not in the same register as interpretability research. But institutional diversity is way higher: universities, NGOs, advocacy orgs, government agencies, stuff happening globally. Better demographics. Funding used to be fragmented, but it’s consolidating through Humanity AI. And the political influence is indirect: advocacy organizations, foundation influence, and regulatory testimony, not direct spending.

So you’ve got a tradeoff sitting right there. One side has depth and access, but narrow everything else. The other side has breadth and diversity, but can’t get in the door. Most of those differences are differences in degree. One side has more money, one side has more institutions, whatever. But access to model internals, that’s a difference in kind. Safety researchers work inside frontier labs. Ethics researchers critique from the outside. It’s not “we have less of the thing you have.” It’s “we don’t have the thing at all.”

The infrastructure picture makes this pretty clear:

Figure 2: The tooling/access landscape, as of March 2026

The ethics side’s tools can only measure what the safety side’s tools were built to find. That’s the whole problem.

And look where the new money is going. Humanity AI’s $500M gets distributed across five focus areas: democracy, education, humanities and culture, labor and economy, and security. Not one of those mentions interpretability, alignment research, evaluation methodology, or any form of technical tooling. None. $500M spent entirely on output-level analysis is not going to close the technical gap. It’s basically going to produce more of what the ethics ecosystem already does well, at a larger scale, with the same structural dependency on the safety ecosystem’s tools, access, and framing.

So here are the three gaps that keep the divide alive.

The funding gap: two ideologically (and implicitly, if not explicitly, politically) distinct donor ecosystems have produced two structurally isolated research communities, and there’s no reliable mechanism for funding work that sits between them.

The political gap: the same money that finances the academic divide now finances electoral politics directly, and a $125M accelerationist lobby benefits from the fact that safety and ethics researchers can’t coordinate on their substantial shared interests.

The tool gap: nearly every interpretability method, red-teaming framework, and frontier model evaluation benchmark was built by the safety ecosystem. The ethics community can critique outputs. It cannot examine internals. It depends almost entirely on the tools, access, and framing of the community it’s supposed to be holding accountable.

The first two gaps matter. The third is the one that makes them self-perpetuating.

Close the tool gap and the other two start to close on their own. Researchers who can independently verify each other’s work don’t need to take each other’s claims on faith. Funders who can see rigorous results from both paradigms don’t need to pick a tribe. The bridge authors wouldn’t be working against their own incentives anymore. Right now, being a bridge person is kind of a career liability. It doesn’t have to be.

There’s a practical window. The Humanity AI coalition’s research agenda is being set now. RFPs haven’t dropped. The executive director hasn’t been hired. Grant categories aren’t locked in. Once they are, redirecting established funding pipelines gets harder with every grant cycle. This isn’t some apocalyptic deadline. It’s a funding cycle. But funding cycles set trajectories, and trajectories are expensive to change. Anyone who’s ever tried to pivot an established grant program knows this.

$500M spent entirely on policy analysis while the technical infrastructure remains a monopoly is just going to reproduce the divide at a bigger budget. More money, same dependency. That’s not closing the gap. That’s wallpapering over a load-bearing crack.

In Part 2, I’ll get into what independent technical infrastructure could actually look like, what it could cost, and why there’s a window right now that probably won’t stay open much longer.